Published: October 25, 2023

With the recent explosion in the popularity of AI, many have put forward theses on where AI and crypto intersect. These innovations hold the potential to revolutionize various aspects of our digital lives, from managing digital assets to preserving intellectual property and combating fraud. Notably, this convergence has given rise to two prominent trends:

Blockchain’s previous AI applications largely concentrated on infrastructure, enabling AI/ML model storage and GPU rentals. This led to trends like token-incentivized reinforcement learning, zkML, and blockchain-based identity registries to combat deep fakes. Simultaneously, a parallel trend is gaining momentum: protocols incentivizing intelligence.

In this report, we delve into the intersection of AI and crypto, with a focus on Bittensor and the $TAO token, exploring their roles in the Peer-to-Peer Intelligence Marketplace and the rise of a Digital Commodity Marketplace.

Following the most recent Revolution Upgrade, which took place on October 2, we also provide a historical overview, sector outlook, competitive analysis, and insights into the value proposition of $TAO.

Bittensor is an open-source protocol with a core mission: to drive AI development through a blockchain-driven incentive structure. In this ecosystem, contributors are rewarded with $TAO tokens for their efforts.

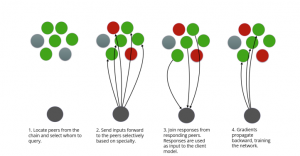

Bittensor functions as a mining network, utilizing token incentives to encourage participation while upholding principles of openness and decentralization. Within this network, multiple nodes host machine learning models, collectively contributing to the pool of intelligence. These models play a crucial role in analyzing extensive text data, extracting semantic meaning, and generating valuable insights across various domains.

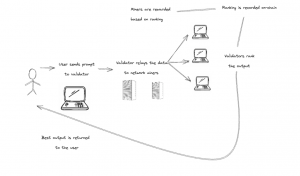

For users, the essential functions are querying the network for access to intelligence, running miners and validators to mine $TAO tokens, and managing their wallets and balances.

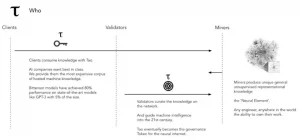

Bittensor’s network relies on contributions from a diverse range of stakeholders, including miners, validators, nominators, and consumers. This collaborative approach ensures that the best AI models rise to the top, enhancing the quality of AI services offered by the network.

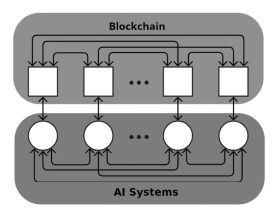

The supply side has two layers: AI (Miners) and blockchain (Validators).

On the demand side, developers can build applications on top of Validators, leveraging (and paying for) use-case-specific AI capabilities from the network.

Coordination between the stakeholders listed above results in a network that promotes the best models for a given use case. With anyone able to experiment, it’s hard for closed-source businesses to compete.

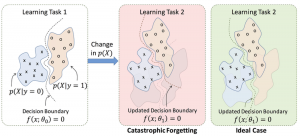

One of the most common misconceptions is that the network supports ML training. In its current state, Bittensor exclusively supports inference, which is the process of drawing conclusions and providing responses based on evidence and reasoning. Training, on the other hand, is a distinct process that involves teaching a machine-learning model to perform a task. This is achieved by feeding the model a substantial dataset of labeled examples, allowing it to learn patterns and associations between data and labels. Inference, meanwhile, utilizes a trained machine learning model to make predictions on new, unseen data. For instance, a model trained to classify images can be employed for inference to determine the class of a new, previously unseen image.
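The distinction between training and inference can be made concrete with a toy example. The sketch below is purely illustrative and is not Bittensor code: `train` and `infer` are hypothetical names, and the "model" is a simple nearest-centroid classifier over one-dimensional features.

```python
def train(examples):
    """Training: learn one centroid (mean feature value) per label
    from a list of (feature, label) pairs."""
    sums, counts = {}, {}
    for x, label in examples:
        sums[label] = sums.get(label, 0.0) + x
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def infer(model, x):
    """Inference: use the already-trained model to label a new, unseen input
    by picking the label whose centroid is closest."""
    return min(model, key=lambda label: abs(model[label] - x))


# Training happens once, on labeled data.
model = train([(1.0, "small"), (2.0, "small"), (9.0, "large"), (11.0, "large")])

# Inference reuses the trained model on inputs it has never seen.
prediction_a = infer(model, 3.0)
prediction_b = infer(model, 8.0)
```

In these terms, the network described here serves requests of the `infer` kind, while the `train` step happens elsewhere.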

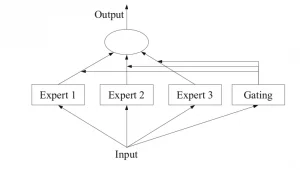

Hence, it’s important to note that Bittensor doesn’t execute on-chain ML but functions more like an on-chain oracle or a network of validators that connects and orchestrates off-chain ML nodes (miners). This configuration creates a decentralized mixture-of-experts (MoE) network, an ML architecture that blends multiple models optimized for different capabilities to form a more robust overall model.

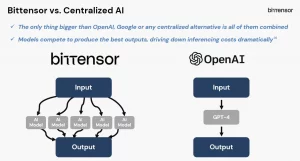

Bittensor’s Peer-to-Peer Intelligence Marketplace is a pioneering concept in the field of AI development, offering a decentralized and permissionless platform that stands in stark contrast to more closed models like OpenAI or Google’s Gemini.

This marketplace is designed to foster competitive innovation, drive the growth of the AI industry, and make AI accessible to a global community of developers and users. Any form of value can be incentivized: the protocol aims to create a fair market for any digital commodity.

In other words, the protocol embodies a peer-to-peer approach to the exchange of machine learning capabilities and predictions among participants within the network. It facilitates the sharing and collaboration of machine learning models and services, promoting a collaborative and inclusive environment where both open-source and closed-source models can be hosted.

Bittensor is unique in the sense that it lays the foundation for the emergence of a Digital Commodity Marketplace, effectively transforming machine intelligence into a tradeable asset. At its core, the protocol establishes a marketplace where machine intelligence is commoditized.

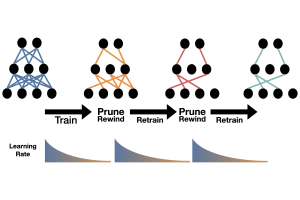

Much like a genetic algorithm, Bittensor’s incentive system continuously evaluates miner performance and makes selections or recycles miners over time. This dynamic process ensures that the network remains efficient and responsive to the evolving landscape of AI development.
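The selection-and-recycling idea can be sketched in a few lines. This is a simplified illustration rather than the protocol's actual registration logic: the `Miner` class, the `score` field, and the `recycle_lowest` function are hypothetical names, and real scores would come from validators.

```python
from dataclasses import dataclass


@dataclass
class Miner:
    uid: int
    score: float  # hypothetical validator-assigned performance score


def recycle_lowest(miners, newcomers, n_replace):
    """Keep the best-scoring miners and hand the slots of the
    n_replace lowest scorers to newcomers."""
    ranked = sorted(miners, key=lambda m: m.score, reverse=True)
    survivors = ranked[: len(ranked) - n_replace]
    return survivors + newcomers[:n_replace]


current = [Miner(0, 0.9), Miner(1, 0.2), Miner(2, 0.6)]
waiting = [Miner(3, 0.0)]

next_round = recycle_lowest(current, waiting, n_replace=1)
next_uids = [m.uid for m in next_round]
```

Repeating this step each round is what gives the process its evolutionary flavor: weak performers lose their slot, and newcomers get a chance to prove themselves.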

In the Bittensor intelligence marketplace, value generation follows a dual approach:

It’s worth noting that Bittensor doesn’t solely reward raw performance but places emphasis on the generation of the most valuable “signal.” This means that the reward system prioritizes the creation of information that offers substantial benefits to a broad audience, ultimately contributing to the development of a more valuable commodity.

As a standalone Layer 1 blockchain, Bittensor is powered by the Yuma consensus algorithm, a decentralized, peer-to-peer consensus algorithm that enables the equitable distribution of computational resources across a network of nodes.

Yuma operates on a hybrid consensus mechanism combining proof-of-work (PoW) and proof-of-stake (PoS) elements. Nodes within the network perform computational work to validate transactions and create new blocks. This work is then validated by other nodes, and successful contributors are rewarded with tokens. It is the PoS component that encourages nodes to hold tokens, aligning their interests with the network’s stability and growth.

Compared to conventional consensus mechanisms, this hybrid model offers several advantages. On the one hand, it avoids the excessive energy consumption often linked to Proof of Work (PoW), addressing environmental concerns. On the other hand, it circumvents the centralization risks seen in Proof of Stake (PoS), preserving network decentralization and security.

The Yuma consensus mechanism stands out for its ability to distribute computational resources across an extensive network of nodes. This approach has far-reaching implications, as it enables the handling of more complex AI tasks and the processing of larger datasets with ease. As the network incorporates additional nodes, it naturally scales to accommodate increasingly substantial workloads.

In contrast to traditional centralized AI applications that rely on a single server or cluster, Yuma-powered applications can be distributed across a network of nodes. This distribution optimizes computational resources, making it possible to tackle intricate tasks while mitigating the risks associated with single points of failure and security vulnerabilities.

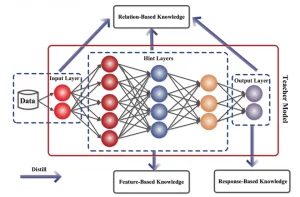

Knowledge distillation is a foundational concept within the Bittensor protocol, fostering collaborative learning among network nodes to enhance performance and accuracy. Similar to how neurons in the human brain work together, knowledge distillation enables nodes to collectively improve within the network.

This process involves the exchange of data samples and model parameters among nodes, leading to a network that self-optimizes over time for more precise predictions. Each node contributes to a shared pool, ultimately improving the network’s overall performance, making it faster and better suited for real-time learning applications like robotics and self-driving cars.

Crucially, this method mitigates the risk of catastrophic forgetting, a common challenge in machine learning. Nodes retain and expand their existing knowledge while incorporating new insights, enhancing the network’s resilience and adaptability.

By distributing knowledge across multiple nodes, the Bittensor TAO network becomes more resilient against disruptions and potential data breaches as well. This robustness is particularly vital for applications dealing with high-security and privacy-sensitive data, such as financial and medical information (more on privacy later).

Taking innovation a step further, the Bittensor network introduces the concept of a decentralized Mixture of Experts (MoE). This approach harnesses the power of multiple neural networks, each specializing in different aspects of data. When new data is introduced, these experts collaborate to produce more accurate collective predictions than any individual expert could achieve alone.
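A generic mixture of experts can be sketched as a gate that weights each expert's output for a given input. The snippet below illustrates that general technique only; it does not describe how Bittensor routes queries. The names `mixture_predict`, `experts`, and `gate` are assumptions, and the experts are stand-in functions rather than neural networks.

```python
import math


def softmax(scores):
    """Turn raw gate scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def mixture_predict(x, experts, gate):
    """Combine expert outputs, weighted by how relevant the gate
    considers each expert for this particular input."""
    weights = softmax(gate(x))
    outputs = [expert(x) for expert in experts]
    return sum(w * o for w, o in zip(weights, outputs))


# Two toy experts and a gate that trusts them equally.
experts = [lambda x: x, lambda x: 2 * x]
gate = lambda x: [0.0, 0.0]

combined = mixture_predict(2.0, experts, gate)
```

With equal gate scores the result is the plain average of the experts; a learned gate would instead shift weight toward whichever expert specializes in the input at hand.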

The consensus mechanism employed combines deep learning with blockchain consensus algorithms. Its primary objective is to distribute stake as an incentive to peers who contribute the most informational value to the network. In essence, it rewards those who enhance the network’s knowledge and capabilities.

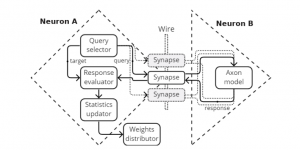

At its core, the Bittensor protocol consists of parameterized functions, often referred to as neurons. These neurons are distributed in a peer-to-peer fashion, with each holding zero or more network weights recorded on a digital ledger. Peers actively engage in ranking one another, training neural networks to determine the value of their neighboring nodes. This ranking process is pivotal in assessing the contributions of individual peers to the network’s overall performance.

Scores generated through this ranking process accumulate on a digital ledger. High-ranking peers receive monetary rewards, gaining additional weight in the network. This establishes a direct connection between a peer’s contributions and their rewards, promoting fairness and transparency within the network.

This approach presents a marketplace where intelligence is priced by other intelligence systems in a peer-to-peer manner across the internet. It incentivizes peers to continually enhance their knowledge and expertise.

To ensure equitable distribution of rewards, Bittensor employs Shapley values, a concept borrowed from cooperative game theory. Shapley values offer a fair and efficient way to allocate rewards among network peers based on their contributions. This alignment of incentives with contributions motivates nodes to act in the network’s best interests, enhancing security and efficiency while driving continual improvement.
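To make the Shapley idea concrete, the sketch below computes exact Shapley values for a tiny cooperative game by averaging each player's marginal contribution over every join order. It shows the textbook definition, not Bittensor's implementation (exact computation grows factorially with the number of players, so real systems approximate it). The peers `a` and `b` and the coalition values are made-up numbers.

```python
from itertools import permutations


def shapley_values(players, value):
    """Average each player's marginal contribution over all join orders.
    `value` maps a frozenset of players to that coalition's worth."""
    totals = {p: 0.0 for p in players}
    orderings = list(permutations(players))
    for order in orderings:
        coalition = frozenset()
        for p in order:
            with_p = coalition | {p}
            totals[p] += value(with_p) - value(coalition)
            coalition = with_p
    return {p: t / len(orderings) for p, t in totals.items()}


# Hypothetical worth of each coalition (e.g. accuracy achieved together).
worth = {
    frozenset(): 0.0,
    frozenset({"a"}): 0.6,
    frozenset({"b"}): 0.4,
    frozenset({"a", "b"}): 0.9,
}

phi = shapley_values(["a", "b"], lambda coalition: worth[coalition])
```

Here peer `a` is credited with 0.55 and peer `b` with 0.35, which together account for the full 0.9 achieved by the pair.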

Bittensor’s core mission centers around fostering innovation and collaboration in the AI space through a decentralized framework. This framework enables the rapid expansion and sharing of knowledge, creating an ever-growing and unstoppable library of information. In this marketplace, developers are empowered to monetize their AI models and provide valuable solutions to businesses and individuals.

The vision of Bittensor extends to a future where AI models are readily accessible and deployable across a wide range of industries. This accessibility fuels advancements and unlocks new possibilities, bridging the gap between AI capabilities and real-world applications.

Much like prominent global AI models such as ChatGPT, Bittensor models generate ‘representations’ based on a universal dataset. To evaluate model performance, Fisher information is used to estimate the impact of removing a node from the network, akin to the loss of a neuron in the human brain.

Beyond model ranking, Bittensor places a strong emphasis on interactive learning. Each model actively engages with the network, seeking interactions with other models, similar to a DNS lookup. Bittensor functions as an API that facilitates data exchange among these models, fostering collaborative learning and knowledge sharing – using both open-source and closed-source models.

Using Yuma consensus to ensure that everyone plays by the rules, the ecosystem acts as a driving force for open-source developers and AI research labs, offering financial incentives to enhance open foundational models.

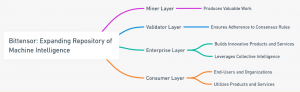

In essence, Bittensor functions as a constantly expanding repository of machine intelligence. This is achieved by bringing together 4 different layers:

Bittensor was founded in 2019 by two AI researchers, Jacob Steeves and Ala Shaabana (and one pseudonymous whitepaper author, Yuma Rao) who were searching for a way to make AI compoundable. They soon realized crypto could be the solution — a way to incentivize and orchestrate a global network of ML nodes to train & learn together on specific problems. Incremental resources added to the network increase overall intelligence, compounding on work done by previous researchers & models.

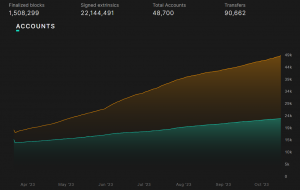

Bittensor’s journey began with the launch of ‘Kusanagi’ in January 2021, marking the activation of the network and allowing miners and validators to start earning the first $TAO rewards. However, this initial iteration encountered temporary halts due to consensus issues. In response, Bittensor forked ‘Kusanagi’ into ‘Nakamoto’ in November 2021.

On March 20, 2023, a significant milestone was reached as ‘Nakamoto’ was once again forked, this time evolving into ‘Finney.’ The purpose of this upgrade was to enhance the kernel code’s performance.

Notably, Bittensor initially aimed to become a parachain on Polkadot, securing a parachain slot through a successful auction in January. However, the decision was made to utilize its own standalone L1 blockchain built on Substrate instead of relying on Polkadot due to concerns related to Polkadot’s development speed.

Bittensor has been on mainnet for over a year, and its focus has been on pioneering research and laying the groundwork for its future potential. Here’s an overview of the current status and the reasons why business use cases have not yet been built on top of its validators:

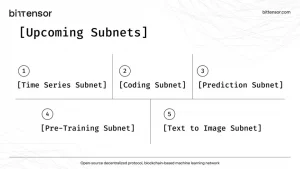

With the latest Revolution upgrade, Bittensor opened the ability for anyone to create a subnetwork that specializes in a specific type of application. For example, Subnet 4 uses JEPA (Joint Embedding Predictive Architecture), an AI approach pioneered by Meta’s Yann LeCun to handle a variety of input and output types such as video, images, and audio in a single model.

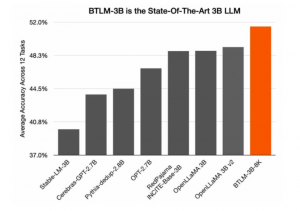

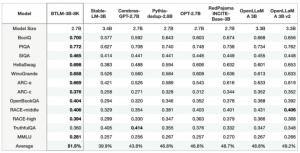

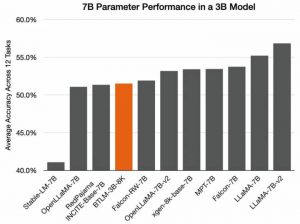

Another remarkable achievement is BTLM-3B-8K (Bittensor Language Model) from Cerebras, a 3B parameter model that makes it possible to run highly accurate and performant models on mobile devices, making AI significantly more accessible. BTLM-3B-8K is available on Hugging Face with an Apache 2.0 license for commercial use.

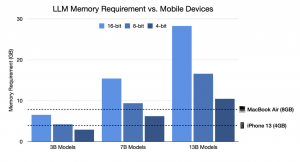

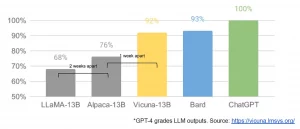

Large GPT models typically have over 100B parameters, requiring multiple high-end GPUs in order to perform inference. However, the release of LLaMA from Meta gave the world high-performance models with as few as 7B parameters, making it possible to run LLMs on high-end PCs.

But even a 7B parameter model quantized to 4-bit precision does not fit in many popular devices such as the iPhone 13 (4GB RAM). While a 3B model would comfortably fit on almost all mobile devices, prior 3B sized models substantially underperformed their 7B counterparts.
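The memory argument can be checked with back-of-the-envelope arithmetic. The sketch below counts only the bytes needed to store the weights; the helper name `weight_memory_gb` is hypothetical, and the calculation ignores activations, caches, and the memory the operating system itself needs, all of which come on top.

```python
def weight_memory_gb(n_params, bits_per_param):
    """Memory needed just to hold the model weights, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


seven_b_4bit = weight_memory_gb(7e9, 4)   # about 3.5 GB of weights
three_b_4bit = weight_memory_gb(3e9, 4)   # about 1.5 GB of weights
```

At roughly 3.5 GB, the 7B weights alone consume nearly all of a 4 GB device before anything else is loaded, whereas a 3B model at about 1.5 GB leaves comfortable headroom.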

BTLM strikes a balance between model size and performance. With 3 billion parameters, it offers a level of accuracy and capability that significantly outperforms previous 3B-sized models.

When looking at individual benchmarks, BTLM scores highest in every category with the exception of TruthfulQA.

Not only does BTLM-3B outperform all 3B models, it also performs in line with many 7B models.

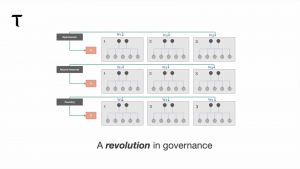

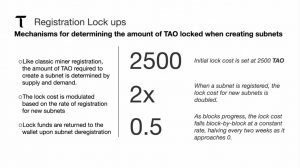

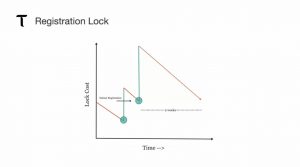

The Bittensor Revolution Upgrade, launched on October 2nd, marks a significant milestone in the development of Bittensor, ushering in substantial changes to its operational structure. Central to this upgrade is the introduction of “subnets,” a concept that grants developers far greater autonomy in shaping their incentive mechanisms and establishing markets within the Bittensor ecosystem.

A key feature of this upgrade is the introduction of a specialized programming language designed specifically for crafting incentive systems. This innovation empowers developers to create and implement their incentive mechanisms on the Bittensor network, utilizing its extensive pool of intelligence to tailor markets to their specific requirements and preferences.

This upgrade also represents a notable departure from a centralized model, where a single foundation controls all aspects of the network, toward a more decentralized framework. Various individuals or groups now have the opportunity to own and manage subnets.

With the introduction of “subnets,” anyone can now create their own subnetworks and define their incentive mechanisms, fostering a broader range of services within the Bittensor ecosystem. This shift promotes diversity and decentralization within the network, aligning with the principles of openness and collaboration that underpin Bittensor’s mission.

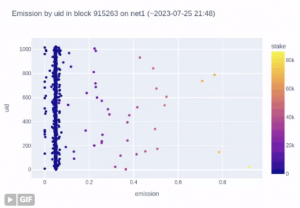

Furthermore, subnets will compete for emissions by garnering consensus from delegates in the new “root network,” introducing a competitive element that can drive innovation and resource allocation.

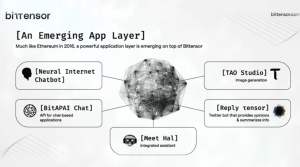

The advent of user-created subnets is reminiscent of the explosion of applications on Ethereum once it opened its doors to the global developer community. This upgrade also underscores the potential of merging various tools and services into a cohesive network. In essence, every element required to forge intelligence is now housed under one roof, regulated by a singular token ($TAO).

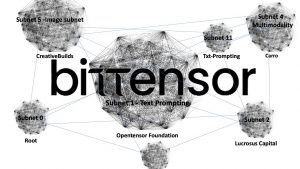

The root network serves as a pivotal component within the Bittensor ecosystem. It acts as a meta-subnet with the crucial role of distributing emissions across other subnets, all based on weighted consensus from key delegates. This shift is transformative, as it changes Bittensor from a single controlled system to a dynamic “network of networks.”

Crucially, emission schedules are no longer solely under the control of the Opentensor Foundation. Delegates within the ‘root’ network now hold authority over incentive distribution. This shift decentralizes the control of incentives, removing sole reliance on any single entity and placing it in the hands of the ‘root’ network.

Subnets within the Bittensor network are self-contained incentive mechanisms that provide a framework for miners to engage with the platform. These subnets play a pivotal role in defining the protocols governing the interactions between miners and validators.

Furthermore, the specifics of incentive mechanisms are no longer hardcoded within the Bittensor codebase. Instead, these details are defined within the subnet repositories, allowing for greater flexibility and adaptability.

Bittensor introduces specific subnetworks, such as the prompting subnetwork and the time series subnetwork. The prompting subnetwork enables the execution of various prompt neural networks, including GPT-3, GPT-4, ChatGPT, and others, for decentralized inference. This functionality allows users to interact with Validators on the network and obtain outputs from the best-performing models, empowering their applications with advanced AI capabilities.

Subnets operate by distributing $TAO tokens to miners and validators based on the value they contribute to the network. The precise rules and protocols for miners’ responses to validator queries and the evaluation process conducted by validators are determined by the code within each subnet repository.

The Root Network serves as a “meta subnet” that operates above and influences other subnets while playing a pivotal role in determining emission scores across the entire system.

Its primary function is to employ a weighted consensus mechanism involving delegates to produce an emission vector for each subnet. Delegates within the ‘root’ network assign weights to different subnets based on their preferences, and a consensus mechanism ultimately determines the allocation of emissions.
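One simple way to picture a weighted consensus over subnets is a stake-weighted average of each delegate's preferences. The sketch below illustrates that idea only; the actual consensus rule may differ. The function name `emission_vector`, the delegate and subnet labels, and the stake figures are all assumptions.

```python
def emission_vector(delegate_stake, delegate_weights):
    """Combine delegates' subnet weights into emission shares that sum to 1,
    giving each delegate influence proportional to its stake."""
    total_stake = sum(delegate_stake.values())
    combined = {}
    for delegate, weights in delegate_weights.items():
        norm = sum(weights.values())
        if norm == 0:
            continue  # a delegate that set no weights has no say
        influence = delegate_stake[delegate] / total_stake
        for subnet, w in weights.items():
            combined[subnet] = combined.get(subnet, 0.0) + influence * (w / norm)
    total = sum(combined.values())
    return {subnet: share / total for subnet, share in combined.items()}


stake = {"delegate_1": 60.0, "delegate_2": 40.0}
weights = {
    "delegate_1": {"subnet_1": 1.0, "subnet_2": 1.0},  # splits evenly
    "delegate_2": {"subnet_1": 0.0, "subnet_2": 2.0},  # backs only subnet_2
}

emissions = emission_vector(stake, weights)
```

In this toy case `subnet_2` receives 70% of emissions and `subnet_1` 30%, showing how a subnet that wins over more stake-weighted support earns a larger share.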

One notable aspect is that the ‘root’ network effectively consolidates the roles of both the Senate and delegation mechanisms, bringing these functions together into a single entity. This consolidation streamlines decision-making processes within the Bittensor ecosystem.

The ‘root’ network possesses the authority to shape the ecosystem by influencing emission allocation. If it deems a subnet or a particular aspect of the system non-valuable, it has the capacity to reduce or eliminate emissions to that component.

Subnets within the Bittensor network must actively strive to attract the majority of weights from delegates within the ‘root’ network to secure a significant share of emissions. This competitive aspect underscores the importance of subnets in demonstrating their value and utility to the broader ecosystem.

Moreover, it empowers the top 12 keys within the network with the potential to veto proposals submitted by the triumvirate, adding an additional layer of governance and checks and balances to the system.

In the realm of technology, power has long been concentrated in the hands of a few tech giants. These giants have maintained control over valuable digital commodities that are essential for driving innovation. Bittensor, however, acknowledges and challenges this prevailing paradigm by introducing a more democratic and accessible system through its marketplace.

Bittensor’s fundamental insight lies in the understanding that intelligence is a result of various digital commodities, such as computing power and data. Historically, these commodities have been tightly controlled and restricted to the domain of tech giants. Bittensor seeks to break these chains by introducing user-created subnets. These markets will operate under a unified token system, ensuring that developers worldwide have equal access to resources that were previously the exclusive domain of a select few within the closed ecosystem of Big Tech.

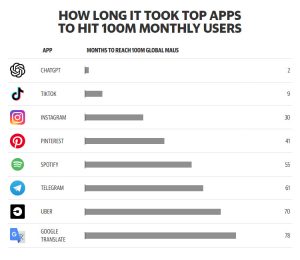

In today’s digital age, the transformative power of Artificial Intelligence (AI) is undeniable. AI has become an integral part of our lives, simplifying research, automating workflows, assisting in coding, and generating content from text. The rapid growth of AI capabilities is evident, but this growth comes with challenges related to scalability and, most importantly, reliability.

Recent incidents, such as ChatGPT’s temporary outage during discussions on AI regulations in Washington, have highlighted the critical need for robust solutions to address AI’s scaling challenges. These outages have left users concerned about the stability and dependability of AI as it becomes increasingly integrated into our daily lives. It is in moments like these that the significance of Bittensor’s $TAO becomes apparent.

Bittensor’s approach not only champions open-source AI but also demonstrates that it can be a financially rewarding pursuit. It mirrors the competitive evolution seen in Bitcoin mining and paves the way for a thriving marketplace where the best AI models rise to the forefront. This shift empowers AI researchers to contribute their expertise to an open and dynamic environment, ultimately benefiting society as a whole.

$TAO offers a decentralized AI infrastructure that can mitigate potential issues like the one experienced by ChatGPT. By decentralizing AI, Bittensor ensures the resilience and reliability of AI systems, even as their demand continues to grow. This approach establishes a dependable foundation for the future of AI services.

Simply put, Bittensor emerges as a global marketplace for open-source artificial intelligence, presenting a compelling solution to the challenges posed by closed-source AI development.

A significant consideration is the current state of AI, much of which remains locked behind closed doors and under the control of a few tech giants. This raises the question: what if AI could be open and learn from other AI models in a collaborative environment? Bittensor’s $TAO seeks to provide a solution to this question.

The debate surrounding whether AI models should be open source has gained prominence as concerns about the alignment problem in AI continue to grow. The fundamental question is whether the actual code behind AI models should be freely accessible to everyone. Interestingly, even if major players like OpenAI were to open source their models, it wouldn’t necessarily pose a threat to Bittensor. In an open-source environment, anyone could utilize these models on the Bittensor network.

Within the tech community, there is a divergence of opinions on this matter. Some argue that open-sourcing AI technology could empower malicious actors to exploit AI for harmful purposes. Conversely, others contend that granting exclusive rights to AI technologies to major corporations poses a more significant danger. For example, concentrating AI power in the hands of a few trillion-dollar corporations, as seen with OpenAI’s focus on raising substantial funds, could raise ethical concerns and highlights the risk that concentrated power corrupts.

Meta’s decision to open-source its Llama 2 LLM indicates a shift in the industry toward embracing open-source practices. This move provides an opportunity for Bittensor to learn from and potentially integrate Meta’s advancements into its network, closing the performance gap more rapidly.

It’s essential to examine the valuation of both $TAO and OpenAI. Presently, OpenAI holds a dominant position in the industry, with a valuation ranging between $80B and $90B. However, it operates within a closed ecosystem heavily reliant on Microsoft and its permissioned cloud services. Despite this, OpenAI has successfully attracted top talent from around the globe. On the other hand, as time progresses and open-source initiatives become more prevalent, the pool of available talent is poised to expand exponentially, reaching every corner of the internet. This democratization of AI expertise could play a crucial role in shaping the adoption of Bittensor.

Developer adoption remains a pivotal factor in Bittensor’s journey. Currently, developers can engage with the network through the Python API developed by the Opentensor Foundation, underscoring the importance of fostering a robust developer community to drive adoption. Bittensor is now actively working on decentralizing critical aspects of the network, such as model creation and training, rewarding the most finely-tuned models while fostering community-driven decision-making.

Interestingly, established players in the AI domain, including OpenAI and Google, have now become competitors of $TAO. They are deeply involved in the model generation stage of AI and have even ventured into potential vertical integrations within various industries. In this context, one of the primary challenges $TAO faces is the data divide problem.

Unlike tech giants like Facebook, Apple, Amazon, Netflix, and Google (FAANG), which have access to vast repositories of meaningful data, crowd-sourced communities may lack the same level of resources and data access. FAANG organizations are equipped with the financial means to power their AI endeavors with robust hardware like Nvidia’s cutting-edge technology, including H100s and GH200s, which can significantly accelerate AI model training.

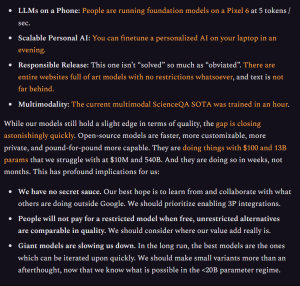

At the same time, it’s essential to note that all mainstream AI solutions today are characterized by being closed and centralized. This includes prominent companies like OpenAI, Google, Midjourney, and others, each offering disruptive AI solutions. However, the gap between closed and open-source models is rapidly narrowing. Open-source models are gaining ground in terms of speed, customization, privacy, and overall capability. They achieve impressive features with relatively modest budgets and parameter sizes compared to their closed counterparts. Moreover, these open-source models operate on an accelerated timeline, delivering results in weeks rather than months.

Google, a tech giant in its own right, has recognized this transformative trend. A leaked internal document from the company states, “We have no moat, and neither does OpenAI.” This acknowledgment underscores the rising influence of open-source AI in the competitive landscape.

In this evolving AI ecosystem, $TAO emerges as a catalyst for change, challenging the traditional model of AI development and training. Its decentralized approach and community-driven ethos position it as a contender in the dynamic arena where tech giants once reigned supreme.

Unlike centralized platforms that restrict access to a single AI model, Bittensor’s architecture provides permissionless access to intelligence. It serves as a one-stop shop for AI developers, offering all necessary computational resources while embracing external contributions. This inclusive model interconnects neural networks across the internet, creating a global, distributed, and incentive-driven machine learning system.

Realizing the full potential of AI demands a departure from closed-source development practices and their associated limitations. Just as children broaden their understanding through social interactions, AI flourishes in dynamic environments. Exposure to diverse datasets, insights from innovative researchers, and interactions with various models nurture the creation of more robust and intelligent AI systems. AI’s trajectory should not be dictated by a single entity.

The choice between two starkly contrasting futures, a world dominated by black-box algorithms and centralized authority or an open, democratized AI landscape, is crucial for society.

In the first scenario, where mega-corporations like OpenAI or Anthropic hold the reins of AI solutions, we risk living under a constant surveillance regime. These corporations would possess immense power over our personal data and daily interactions, with the authority to shut off services and report individuals for dissenting views or discussions.

Elon Musk says that A.I. is ‘one of the biggest risks’ to civilization and needs to be regulated

He co-founded OpenAI

— Genevieve Roch-Decter, CFA (@GRDecter) February 15, 2023

However, the more optimistic alternative offers a world where AI is rooted in open-source platforms, built on universally-owned networks. Here, power and control are decentralized, and AI serves as a tool for empowerment rather than surveillance. In this scenario, creativity and development can thrive without the fear of corporate bias or censorship.

Just as the internet democratized access to information, an open AI ecosystem would democratize access to intelligence. It ensures that intelligence isn’t monopolized by a select few, promoting a level playing field where anyone can contribute, learn, and benefit.

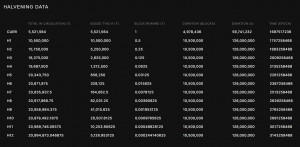

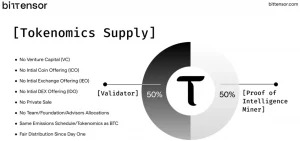

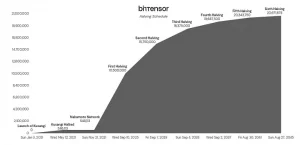

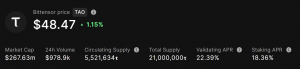

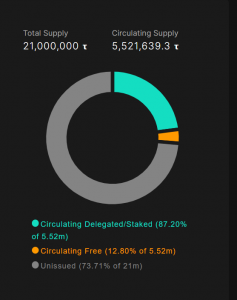

Another similarity with Bitcoin is that $TAO’s issuance schedule also follows the concept of the halving, which occurs approximately every 4 years. However, this is determined by the total token issuance rather than block number. For instance, once half of the total supply has been issued, the rate of issuance is halved.

Importantly, $TAO tokens used to recycle registrations are burned back into the unissued supply, leading to a gradual lengthening of the halving intervals. This mechanism ensures that the issuance schedule adjusts dynamically over time, reflecting the network’s needs and economic dynamics.
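
As a rough illustration of the issuance-based halving described above, the sketch below computes the block reward from the net amount issued and shows how recycling lengthens the first halving interval. The 21,000,000 cap (borrowed from Bitcoin), the 12-second block time, and all function names are assumptions made for illustration; the 1 $TAO per block reward is the figure quoted in this report.

```python
MAX_SUPPLY = 21_000_000        # assumption: cap mirrors Bitcoin's 21M
INITIAL_REWARD = 1.0           # $TAO per block, as quoted in this report
BLOCK_TIME_SECONDS = 12        # assumption: not stated in this report
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def block_reward(issued: float) -> float:
    """Reward per block, given the net amount of $TAO issued so far.

    The reward halves each time net issuance crosses 1/2, 3/4, 7/8, ...
    of the maximum supply, rather than at fixed block heights.
    """
    reward = INITIAL_REWARD
    remaining = MAX_SUPPLY / 2
    threshold = MAX_SUPPLY / 2
    while issued >= threshold and reward > 1e-9:
        reward /= 2
        remaining /= 2
        threshold += remaining
    return reward


def blocks_to_first_halving(recycled_per_block: float = 0.0) -> float:
    """Blocks needed for net issuance to reach half the supply.

    recycled_per_block is a hypothetical average amount of $TAO returned
    to the unissued supply per block through registration recycling.
    """
    net_per_block = INITIAL_REWARD - recycled_per_block
    if net_per_block <= 0:
        raise ValueError("net issuance per block must be positive")
    return (MAX_SUPPLY / 2) / net_per_block


def blocks_to_years(blocks: float) -> float:
    return blocks * BLOCK_TIME_SECONDS / SECONDS_PER_YEAR


for recycled in (0.0, 0.1):
    blocks = blocks_to_first_halving(recycled)
    print(f"recycled/block={recycled}: {blocks:,.0f} blocks, "
          f"{blocks_to_years(blocks):.2f} years")
```

Under these assumptions, the first halving arrives after 10.5 million blocks with no recycling, which is roughly four years; any recycled $TAO pushes that date further out.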

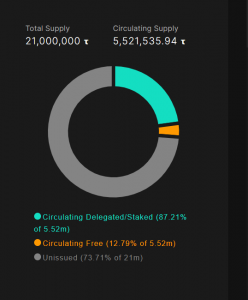

Bittensor’s $TAO token economy is characterized by its simplicity, commitment to decentralization, and fair distribution. Unlike many other blockchain projects, $TAO tokens have not been allocated to any party through ICOs, IDOs, private sales to VCs, or privileged allocations to the team, foundation, or advisors. Instead, every circulating token has to be earned through active participation in the network.

There are also capital allocators involved in the network, participating as miners or validators as well as providing market making services, such as DCG, GSR, or Polychain. What’s relevant is that none of them received a token allocation originating from a pre-sale or private sale.

The $TAO token can be used for governance, for staking and participating in the consensus mechanism, and as a means of payment within the Bittensor network.

This way, validators and miners stake their tokens as collateral to secure the network and earn rewards through inflationary emissions, while users and enterprises can use $TAO to access AI services and applications built on the network.

New $TAO tokens can only be produced through mining and validating. The network rewards both miners and validators, and each block grants a reward of 1 $TAO, shared equally between miners and validators. Hence, the only ways to acquire $TAO are to purchase tokens on the open market or to engage in mining and validation activities.

The straightforward token distribution model of $TAO reflects the principles of decentralization, reminiscent of Bitcoin’s ethos set by Satoshi Nakamoto. The genesis minting of $TAO aligns with Bitcoin’s emissions schedule ($BTC), providing an equal opportunity for anyone who contributes value to the network. This approach underscores the importance of preventing the concentration of power and ownership, particularly in the realm of AI, which has significant societal implications and should not be controlled by a select few.

This distribution model ensures that mining remains a competitive process. As more miners join the network, the competition increases, making it challenging to maintain profitability. This, in turn, motivates miners to find ways to reduce their operational costs, promoting efficiency and innovation within the network.

$TAO, the native token of the Bittensor network, derives its intrinsic value from its unique role in the ecosystem. Unlike the standard L1 model where network tokens derive their value from selling block space, the value of $TAO is tied to the AI services it enables. As these AI services become more impactful and useful, the demand for $TAO increases.

Holding $TAO grants access to a wide array of interconnected digital resources, including data, bandwidth, and intelligence generated and verified by network participants. As reflected by the emissions schedule, $TAO’s value is not solely based on speculation or scarcity but is deeply rooted in the tangible contributions and utility it provides within the Bittensor network.

However, maintaining this cycle of creation and reward isn’t guaranteed. Miners and validators, while contributing valuable intelligence to the network and earning $TAO tokens in return, also have an incentive to sell to cover expenses, similar to Bitcoin miners.

Like any other token, the price of $TAO is determined by the fundamental economic principles of supply and demand. Increased demand for $TAO results in price appreciation, while decreased demand leads to price depreciation. Hence, the idea is that demand from ecosystem activity will offset the new supply coming from emissions.

Newly issued $TAO can only be earned by contributing to the network. Anyone else who wants to start using the network has to buy $TAO, and then hold or spend it.

As the network expands and more AI models and subnets are added, the potential for value capture increases. The network’s growth is also fueled by the synergy between AI and blockchain, creating a self-reinforcing cycle.

This way, Bittensor embodies the principles of Metcalfe’s Law, where the value of a network is proportional to the square of the number of connected users or nodes. As more participants join the network, the value it offers increases quadratically: doubling the number of participants roughly quadruples the value.

In Bittensor, validators are incentivized to attract stake from token holders, and this stake is fundamental to their operation within the network. As a token holder, you can choose among a variety of validators to stake your $TAO with. The most common option is the OpenTensor Foundation itself, with about 20% of network ownership.

Currently, validators distribute 82% of their rewards to delegates in the form of $TAO tokens. As a consequence, delegating $TAO tokens to a validator presents an opportunity for token holders to earn staking rewards. This can help to protect users against the potential dilution from inflationary emissions.
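
To make the delegation figure concrete, here is a minimal sketch of how a validator's reward might be split among delegates. The 82% share comes from this report; the pro-rata split by stake, the function name, and the sample stakes are assumptions for illustration and may not match how the network actually computes payouts.

```python
DELEGATE_SHARE = 0.82  # share of validator rewards passed to delegates, per this report


def delegate_payouts(validator_reward: float, stakes: dict[str, float]) -> dict[str, float]:
    """Split the delegates' share of a reward pro rata by stake (assumed rule)."""
    total_stake = sum(stakes.values())
    if total_stake <= 0:
        raise ValueError("total delegated stake must be positive")
    pool = validator_reward * DELEGATE_SHARE
    return {name: pool * stake / total_stake for name, stake in stakes.items()}


# Hypothetical delegates and stakes, in $TAO.
payouts = delegate_payouts(100.0, {"alice": 300.0, "bob": 100.0})
for name, amount in payouts.items():
    print(f"{name}: {amount:.2f} $TAO")
```

In this example the validator keeps the remaining 18% of the reward, and a delegate with three times the stake receives three times the payout.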

When assessing the risk/reward of allocating part of the portfolio to $TAO, it is important to be aware of what you are actually buying. The purchase, for example, does not entitle the holder to any sort of yield paid out in USD generated from the economic activity of the network. Instead, you are rewarded with token emissions. As a token holder you could then delegate those emissions to earn an APY and increase your $TAO holdings.

The analogies with Bitcoin are clear, but there is an implicit story behind $BTC that makes it unique. Nobody can provide a satisfactory answer as to what the value of $BTC is or why it has any value at all, which is why the community ends up in tribal warfare between the no-coiners, the “shitcoiners”, and the maxis.

Indeed, the actual token economy of Bitcoin is simple to understand: $BTC is used to incentivize miners to operate and run the network. As a consequence, existing holders get diluted (although they can become miners – or delegates in the case of Bittensor). Hence, those who hold the token are not rewarded, and don’t receive any incentive from the underlying network.

In the case of $BTC, however, there is an important factor to consider, and that is scarcity. The fact that there will only ever be 21 million $BTC makes it unique. And while the token economy of $TAO has been modeled after Bitcoin itself, more than 70% of its tokens are still unissued. This presents a dilemma for investors about what they value more: the decentralization of the network, or the scarcity of the asset.

In the end, the utility of $TAO derives from the access it provides to AI models, its use for governance, access to staking rewards, and as an incentive mechanism.

Current infrastructure developments are paid for by the Opentensor Foundation, which is funded through delegation to the Foundation and the delegation rewards it earns. Other developments are carried out by third parties who operate their own validators and are funded through delegation as well.

Just as any global initiative requires funding for research, development, and deployment, AI’s success hinges on how capital is coordinated and how stakeholders are rewarded for their contributions. It is this strategic allocation of resources (research, GPUs for training…) that drives AI’s growth and impact.

In the realm of AI, especially in the case of large language models like ChatGPT, operational costs are substantial. OpenAI, for instance, is estimated to spend approximately $700,000 per day to operate ChatGPT, which highlights the considerable financial burden associated with large-scale AI models. Training costs can range from millions to tens of millions of dollars for each model, making it an even more resource-intensive endeavor. The cost of training a model on a large dataset can be even higher, reaching up to $30 million.

While the company has raised substantial funding, including a recent investment from Microsoft (roughly half in the form of Azure credits), the growing costs of training large language models are a concern. Each training run costs millions, and the need to start from scratch for new models exacerbates this issue.

This is where Bittensor’s approach of “Knowledge Compounding” becomes relevant. It focuses on decentralization and collaboration, allowing AI systems to build upon existing knowledge in a decentralized manner rather than starting from scratch.

Bittensor is an open-source protocol that powers a decentralized, blockchain-based machine learning network. The team behind Bittensor includes Jacob Steeves (Founder), Ala Shaabana (Founder), Jacqueline Dawn (Director of Marketing), and Saeideh Motlagh (Blockchain Architect) among others. The Opentensor Foundation also plans to expand their team this year.

The pseudonym Yuma Rao also appears in Bittensor’s white paper, much as Satoshi Nakamoto does in Bitcoin’s. It is not known whether this person really exists, and we may never know more about him or her.

Bittensor has not disclosed any notable advisors or key investors, other than receiving funding from the OpenTensor Foundation, which is a non-profit organization that supports the development of Bittensor. Bittensor has also not announced any official partnerships.

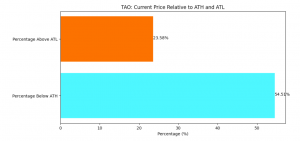

Most tech companies are far down from their pre-pandemic valuations, yet AI companies are now reaching ATHs in both valuation multiples and growth rate.

With a market capitalization significantly lower than industry giants, Bittensor might actually be the perfect playground for large-scale/high-demand AI applications and the use of open-source models.

The simplest way to gauge upside is to compare with OpenAI’s private valuation of $29B. Realistic or not, this is slightly over 28x $TAO’s FDV. Considering how long it will take for the entire supply to enter circulation, we can use the circulating market cap instead to come up with a ballpark figure, where OpenAI’s private valuation is more than 108x $TAO’s market cap.
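
The arithmetic behind these multiples can be reversed to see what they imply. The sketch below uses only the three figures quoted above; because the multiples are stated as "slightly over" and "more than", the derived values are approximate upper bounds rather than exact market data, and the variable names are purely illustrative.

```python
OPENAI_VALUATION_USD = 29e9  # private valuation cited above
FDV_MULTIPLE = 28            # "slightly over 28x" $TAO's FDV
MCAP_MULTIPLE = 108          # "more than 108x" $TAO's circulating market cap

implied_fdv = OPENAI_VALUATION_USD / FDV_MULTIPLE
implied_market_cap = OPENAI_VALUATION_USD / MCAP_MULTIPLE

# Market cap / FDV is the fraction of the total supply already circulating.
implied_circulating_share = implied_market_cap / implied_fdv

print(f"Implied FDV:               ${implied_fdv / 1e9:.2f}B")
print(f"Implied market cap:        ${implied_market_cap / 1e6:.0f}M")
print(f"Implied circulating share: {implied_circulating_share:.1%}")
```

The implied circulating share of roughly a quarter of the supply is consistent with the earlier observation that more than 70% of $TAO remains unissued.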

However, that’s a highly speculative approach that can be simplified as making a bet on projects that can benefit from sitting at the intersection of AI and crypto.

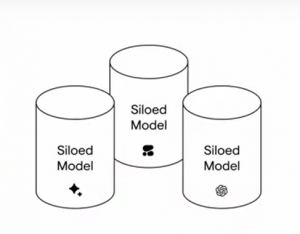

The most important feature to keep in mind is that Bittensor is tackling the centralization issue of AI. Right now, a small number of corporations control a handful of large and powerful models, but they are all siloed and there is barely any collaboration or knowledge sharing.

Siloed AI models cannot learn from each other, and are therefore non-compounding (researchers must start from scratch each time they create new models). This is in stark contrast to AI research, where new researchers can build on the work of past researchers, creating a compounding effect which supercharges idea development.

Siloed AI is also limited in functionality since third-party application & data integrations require permission from the model owner (in the form of technology partnerships & business agreements). This limitation directly affects the value and utility of AI, as it can only be as valuable as the range of applications it can effectively power.

This centralized, winner-takes-all environment is not beneficial for small teams with fewer resources. In this context, the core strength of Bittensor is its decentralized network and incentive mechanism, which encourage small teams and researchers to monetize their work.

If Bittensor succeeds in narrowing the performance gap with leading closed-source AI models like GPT-4, it could become the go-to choice for developers, businesses, and researchers in the crypto and AI space. Its open and collaborative nature positions it as an attractive alternative to closed ecosystems, potentially leading to significant adoption.

Ultimately, $TAO’s valuation can be derived either from the network’s utility (the economic activity built on top) or from direct cash flow to the protocol.

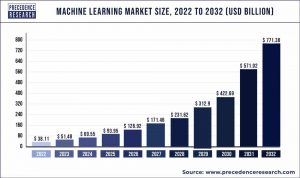

Since utility is more subjective and abstract to value, we can start off with cash flow. Assuming the ML market can reach a certain market size in the future (see Precedence Research estimates in the image below), we can value the Bittensor network based on its potential market share and revenue multiple.
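
The cash-flow approach described above reduces to a single product. The sketch below shows that calculation; every input value is a hypothetical placeholder chosen only to demonstrate the formula, not an estimate from Precedence Research or from this report.

```python
def implied_network_value(market_size_usd: float,
                          market_share: float,
                          revenue_multiple: float) -> float:
    """Value = (market size x share captured) x revenue multiple."""
    if not 0.0 <= market_share <= 1.0:
        raise ValueError("market_share must be a fraction between 0 and 1")
    captured_revenue = market_size_usd * market_share
    return captured_revenue * revenue_multiple


# Placeholder inputs for illustration only; substitute your own assumptions.
scenarios = {
    "low":  {"market_size_usd": 100e9, "market_share": 0.001, "revenue_multiple": 5},
    "high": {"market_size_usd": 100e9, "market_share": 0.01,  "revenue_multiple": 10},
}

for label, inputs in scenarios.items():
    value = implied_network_value(**inputs)
    print(f"{label}: ${value / 1e9:.2f}B")
```

Because the result scales linearly with each input, the output is only as reliable as the market-share and multiple assumptions fed into it.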

Regardless of the estimated market size, Bittensor is still a highly specialized and complex project to understand, which is an impediment for easy on-boarding of developers and adoption by users.

The project is also still at a very early stage of development, and there might be unexpected issues with the network. For instance, in June a group of colluding miners gamed the network and sold their $TAO on the market. The temporary fix was to bring emissions down by 90% in order to give the Opentensor Foundation extra time to work on a solution that keeps the network honest and allows the protocol to operate as intended.

Bittensor update

Good news, a group of colluding miners that gamed the network and sold their $TAO on the market has been identified

As a temporary solution, the TAO emission has been brought down by 90%, therefore giving the Opentensor Foundation time to work on a solution… pic.twitter.com/6svEvB3wth

— TAO-Validator.com (@TAO_Validator) June 15, 2023

The majority of the products that are currently live on the network cannot compete against their centralized counterparts either, and so far have a low adoption rate. The best way to learn is to try the services offered on the Bittensor Hub for yourself.

We should also ask whether Bitcoin tokenomics make sense for a network specialized in providing AI services like Bittensor. Perhaps the disinflationary nature of $BTC is not the best fit for a network that needs an increasing number of miners and applications built on top in order to scale. Ideally, the token should inflate as adoption of the network grows, more akin to digital oil than digital gold. In some ways this is already built in, by incentivizing miners to compete against each other and distributing the supply over a span of more than 200 years.

Another challenge is privacy, since data cannot be encrypted before it goes through the neural network. This is even more problematic in a decentralized setting, since any data that goes through the learning or inference process will not be private. Granted, this is a potential issue with centralized providers as well, but there you only have to worry about one known party seeing your data instead of an unknown number of parties.

Bittensor can be a powerful bet on the intersection of AI and crypto. However, it is undoubtedly one of the most complex projects when it comes to evaluating growth rate and potential upside.

There is clearly a lot of potential in a decentralized network to leverage the utility of AI, especially when incentivizing open source models and decentralizing the ownership of the network. However, services and business cases built on top of Bittensor are not competitive enough yet.

AI is also an industry that requires huge operating expenses and large amounts of funding that are only achievable by industry giants. Bittensor is a very contrarian bet in this sense, which is why it is worth considering as many risk/reward factors as possible.

Revelo Intel has never had a commercial relationship with Bittensor and this report was not paid for or commissioned in any way.

Members of the Revelo Intel team, including those directly involved in the analysis above, may have positions in the tokens discussed.

This content is provided for educational purposes only and does not constitute financial or investment advice. You should do your own research and only invest what you can afford to lose. Revelo Intel is a research platform and not an investment or financial advisor.